Platforms designed for athletes are built on the wrong assumption.

WEVOLV · UX research

Athletes don't connect based on where they are in their career. They connect based on what's happening right now.

The research challenged my original hypothesis and pointed to something nobody was designing for: the phase between evaluating a connection and deciding to act on it.

Duration

7 Weeks

Methods

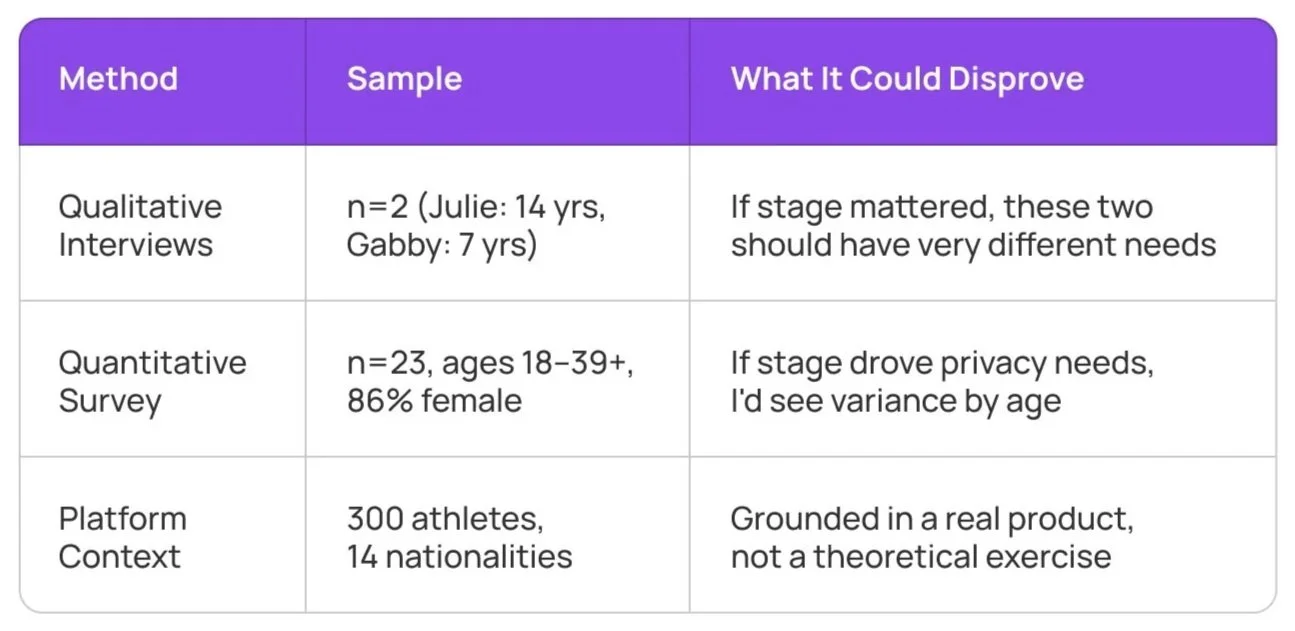

Qualitative interviews, Quantitative survey (n=23), Secondary research & competitive analysis

Tools

Notion, Miro, Figma

Maya had a country, a contract and one line of instructions.

Maya is 22. First professional contract in Spain. She doesn't speak the language and doesn't know anyone. Her agent's instructions for arrival were one line.

“Look for the tall guy at the airport.”

— Actual agent instruction for a first-time professional athlete abroad

She found a trainer on Instagram three weeks ago. She still hasn't messaged him. That's not hesitation. That's a rational response to missing infrastructure, and it's the thread that runs through everything this research found.

WEVOLV already had 300 athletes across 14 nationalities, with three connections each. That's 270 honest peer reviews that don't exist yet, before the platform has even scaled. The gap was already visible in the data.

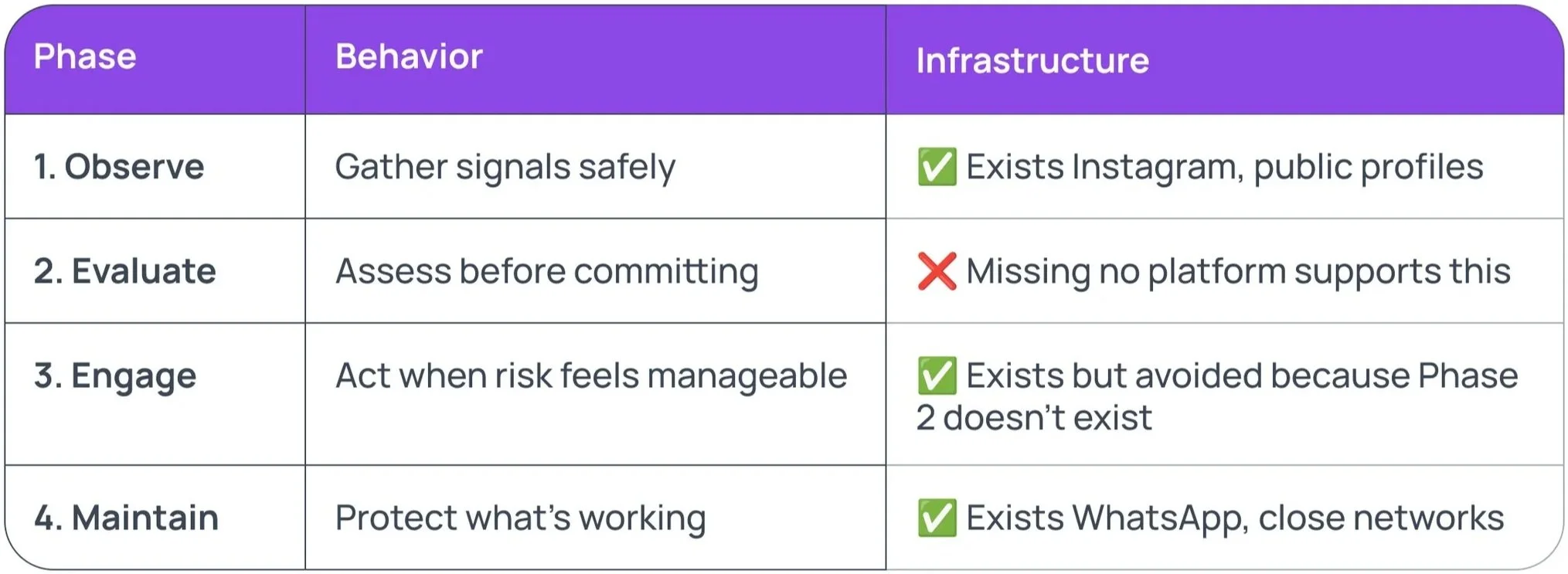

Athletes have Instagram, WhatsApp, LinkedIn and Snapchat. None of them fill the gap between observing and trusting.

Every platform gives athletes two options: go public and risk exposure, or stay disconnected and miss opportunities.

What athletes actually use:

Instagram: ~90% use it for discovery. It doesn't tell you whether someone is trustworthy.

WhatsApp: Direct communication, but only once trust already exists.

LinkedIn: Built for résumés and job hunting, not for athletes navigating active careers abroad.

Snapchat: Used to hide location from teams and agents. Damage control, not networking.

Three gaps compound across all of them:

No peer signal before engaging.

No low-effort way to test if a contact is worth pursuing.

No visibility control without manually managing every platform.

Instagram lets you observe. WhatsApp connects you once trust already exists. Nothing sits in between.

WEVOLV isn't the fifth platform. It's the missing layer that makes the other four work.

The brief came with a feature request. I came with questions.

What happened: Both interviewed athletes had the same evaluation process despite very different experience levels. The survey showed zero variance by age. Both methods converged, and both contradicted my original hypotheses. A better model emerged.

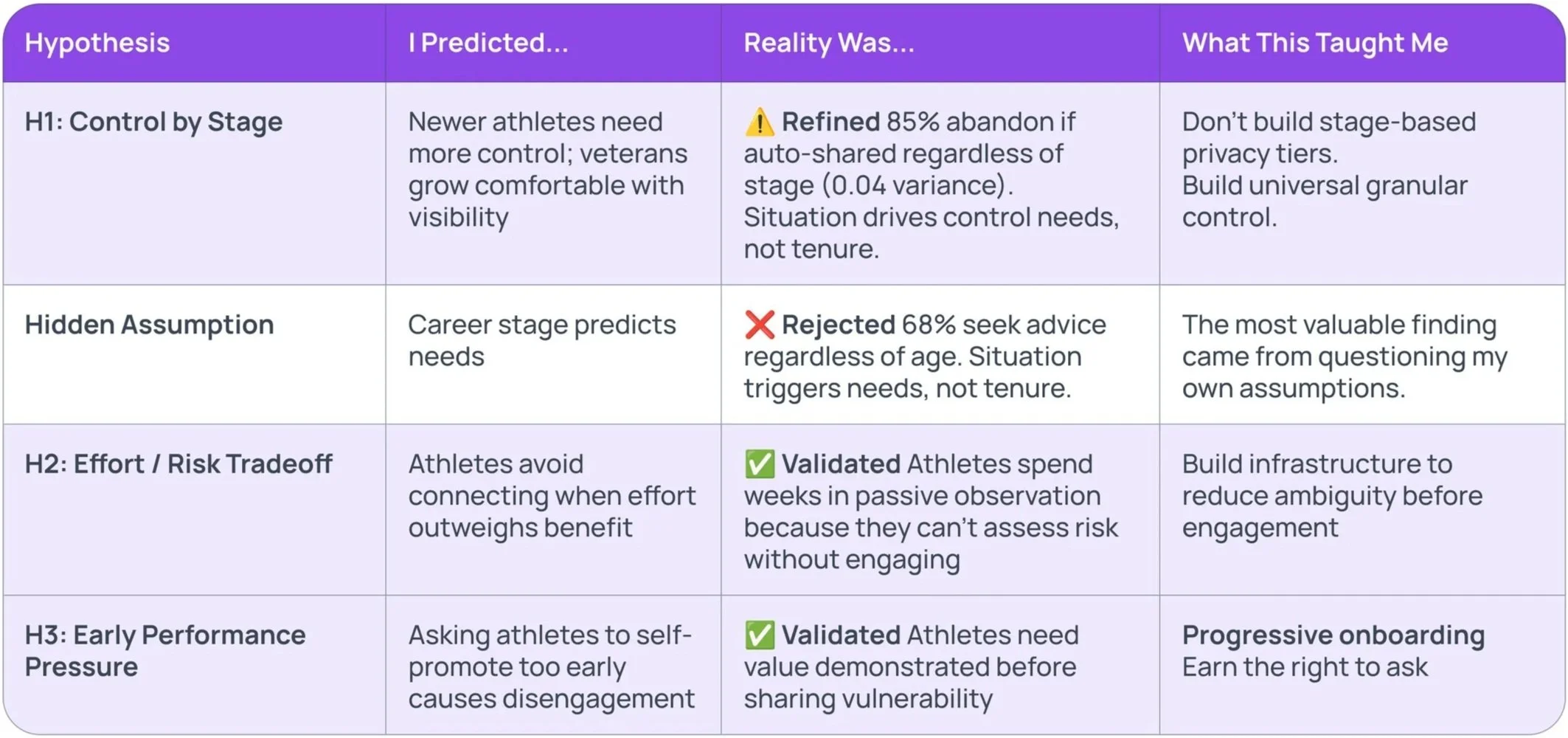

I went in with assumptions. The data replaced most of them.

The table shows the formal hypotheses and what the data actually returned. The most consequential row isn't a formal hypothesis. It's a hidden assumption embedded in the research design itself: that career stage predicts what athletes need. It doesn't. The data showed zero variance by age. Rejecting that assumption changed the product direction more than any deliberate hypothesis did.

I found a behavior pattern nobody had mapped. The gap was always Phase 2.

Every interview and the survey data revealed the same pattern regardless of career stage, experience level or age.

Athletes can Observe. They can Engage. They can Maintain. The gap is Phase 2: the moment between watching someone on Instagram and deciding whether to message them. That's where 2–4 weeks of passive waiting lives.

That's what WEVOLV fills.

This model anchors every feature recommendation and every screen flow that follows.

I was wrong about athletes. Here’s exactly how.

Assumptions challenged:

Athletes were hesitant The data showed they were strategic. Missing infrastructure, not motivation, was the problem.

Career stage predicted needs Zero variance by age. Situation drives behavior, not experience level.

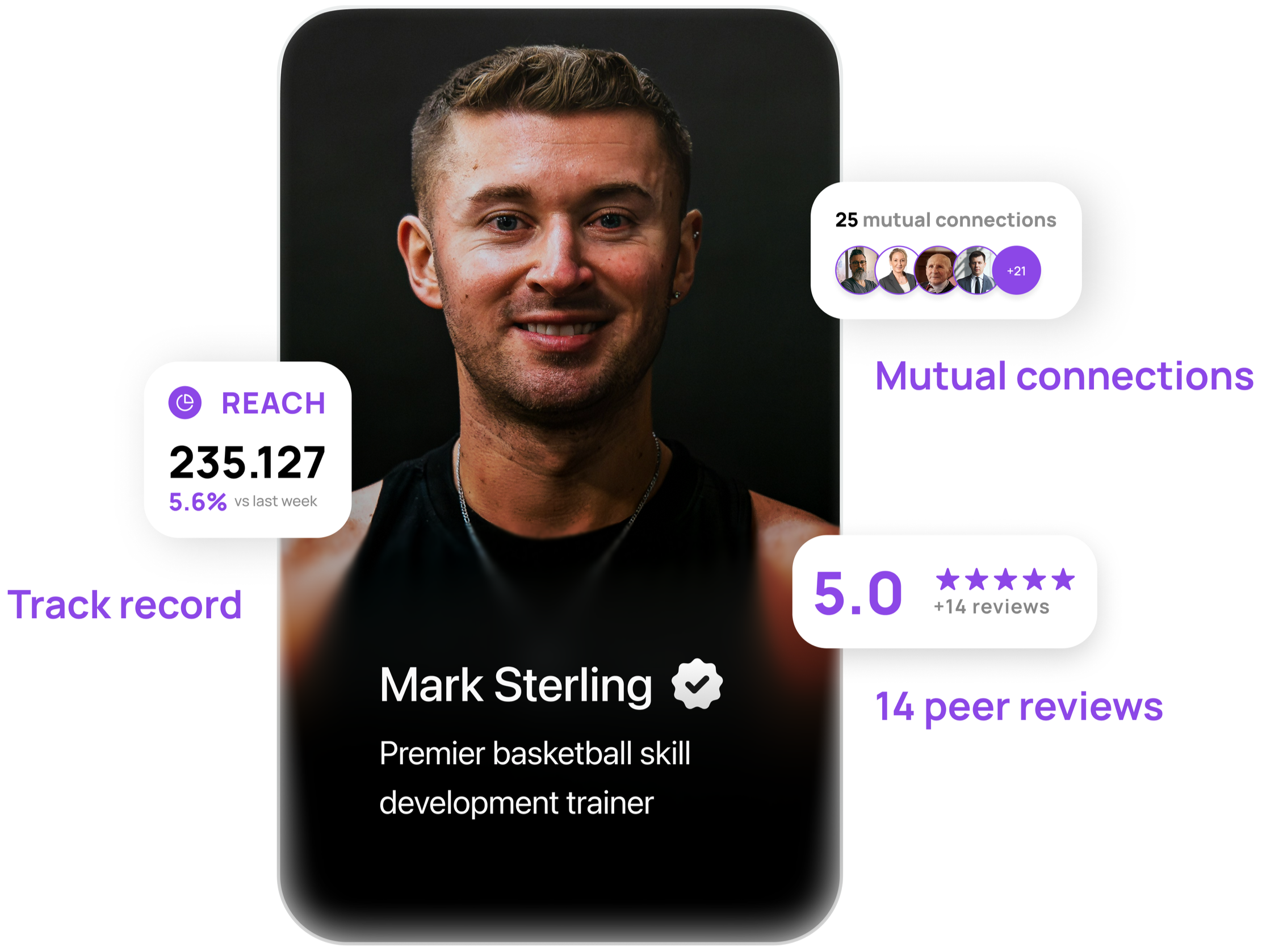

One trust signal anchored decisions Athletes triangulate. Peer reviews + connection depth + track record, all three required.

Control and openness were in tension Athletes with real control share more, not less. Control enables connection.

86% female sample was a limitation The data made it a strategic signal. Women's sports investment is up 300% since 2019.

I designed for two days, not two to four weeks.

She sees peer reviews from athletes in similar situations. Mutual connections with visible depth: not just that a connection exists, but how close it actually is. His track record. She sets her own access terms before any contact happens.

She engages. Conditionally. If it doesn't work out, she ends access cleanly.

The full product flow shows how every research finding connects into one end-to-end system.

Every recommendation I made traces back to a specific finding.

Build now

Trust score: Peer reviews + connection depth + track record, visible before any engagement is required.

Three-tier privacy controls: Inner Circle / Professional / General, universal from day one.

Situation-based matching: “New to Belgium”, not “7 years pro”.

Anonymous feedback: 30% fear retaliation; without truly untraceable reviews, trust scores get gamed by silence.

Relationship depth visibility: A flat connection list creates context collapse; athletes need to know how close a mutual connection actually is.

Validate before building

AI-assisted conversation scaffolding: Frameworks for hard professional conversations; concept test before building.

Proactive intelligence layer: Agentic AI pre-processing profiles before the athlete opens the app; build the trust graph first, since this depends on it.

Don't build

Public performance metrics.

Automated matching without context.

Generic community features.

The most valuable moment wasn’t a confirmed finding. It was the one that broke my assumption.

The finding that had immediate impact: The client confirmed that the trust triangulation finding stopped them from shipping a verified-badge feature they were close to building.

What would have been built without this research: Confirming the hesitant-athlete assumption would have led to designing for motivation. Finding the strategic risk manager instead led to designing for infrastructure, cutting the time to a confident decision from weeks to days.

Every wrong assumption would have built the wrong product for Maya. Behavioral truth was my quality bar. Every design decision had to earn its place. If it didn't match how athletes actually behave, it didn't make the cut.