Professional athletes playing abroad can’t afford to trust the wrong people.

WEVOLV · UX Design

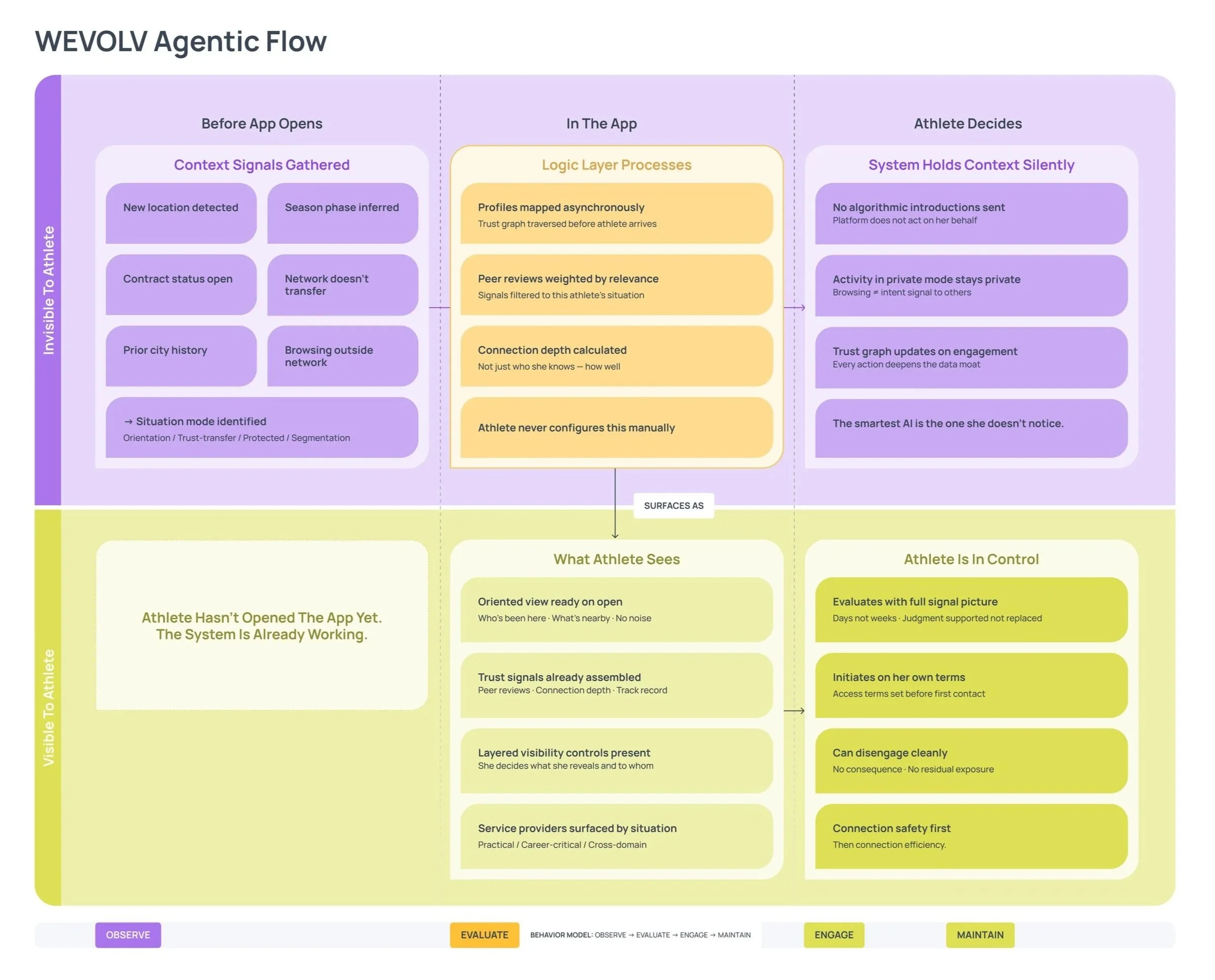

What athletes need shifts with their situation, not their career stage, and the system is designed around that. It works before the athlete opens the app, surfaces what's relevant to her specific moment and earns trust before asking for anything.

Duration

Design 2 Weeks

Methods

Qualitative interviews, Quantitative survey (n=23), Secondary research & competitive analysis

Tools

Notion, Miro, Figma

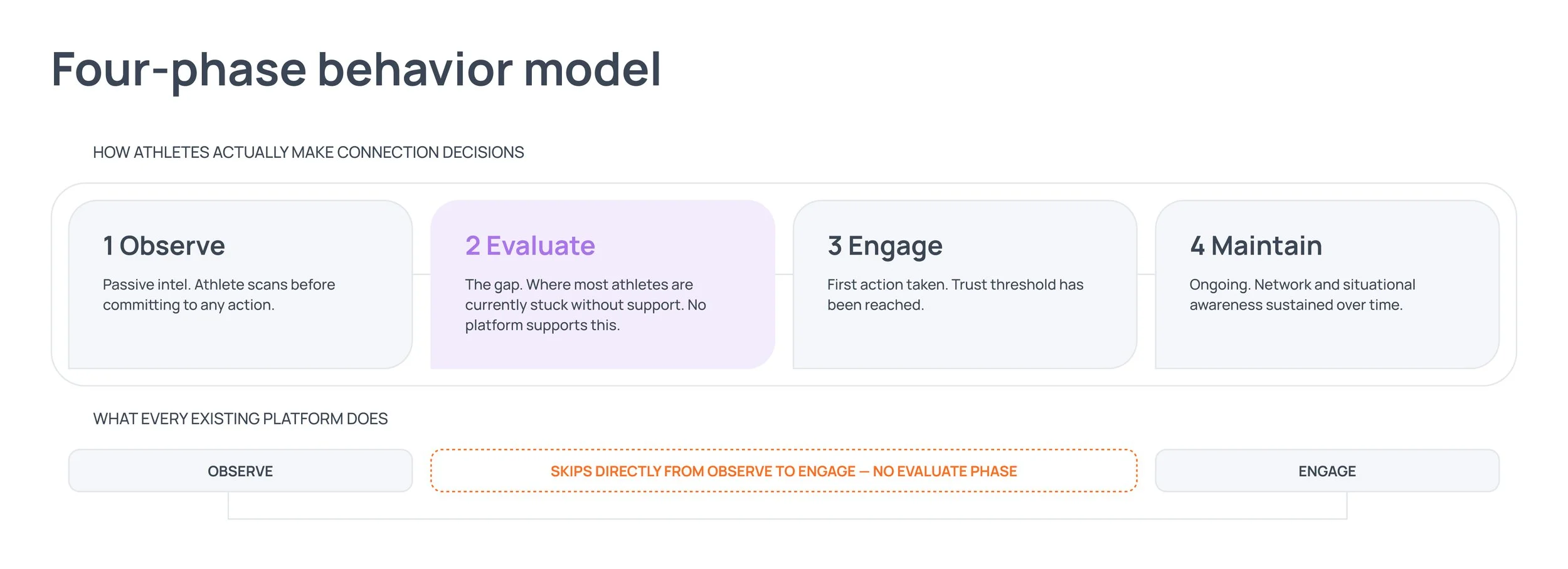

The phase athletes spend the most time in isn’t supported by any existing platform.

Every platform skips the evaluate phase.

Who the user is: Professional athletes managing contracts, clubs and connections in countries where they have no established network and no local context.

What athletes do before connecting: Two to four weeks of passive observation. Monitoring social media, asking around, piecing together second-hand intel. They're evaluating, but doing it alone.

The gap: Evaluate sits between awareness and engagement, and it doesn't exist in any product designed for athletes.

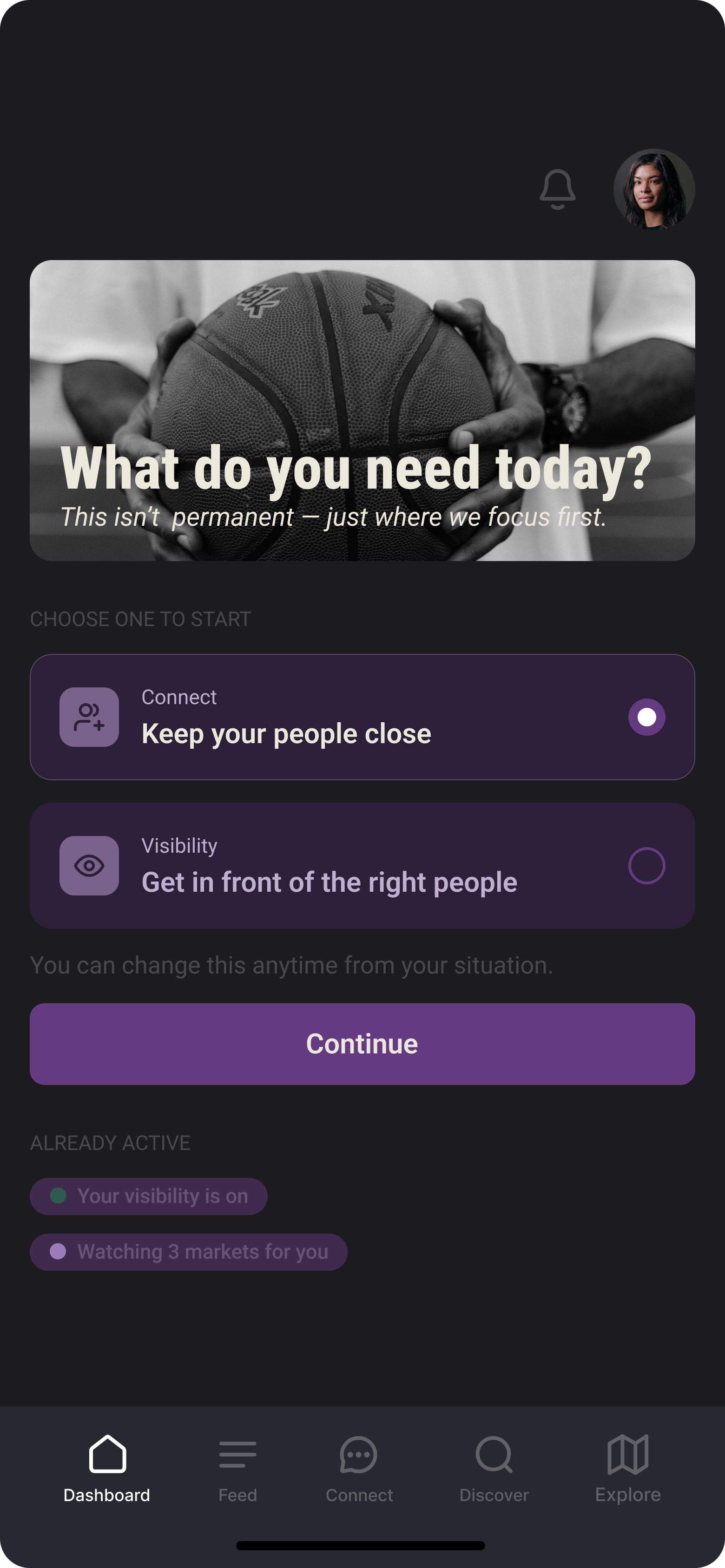

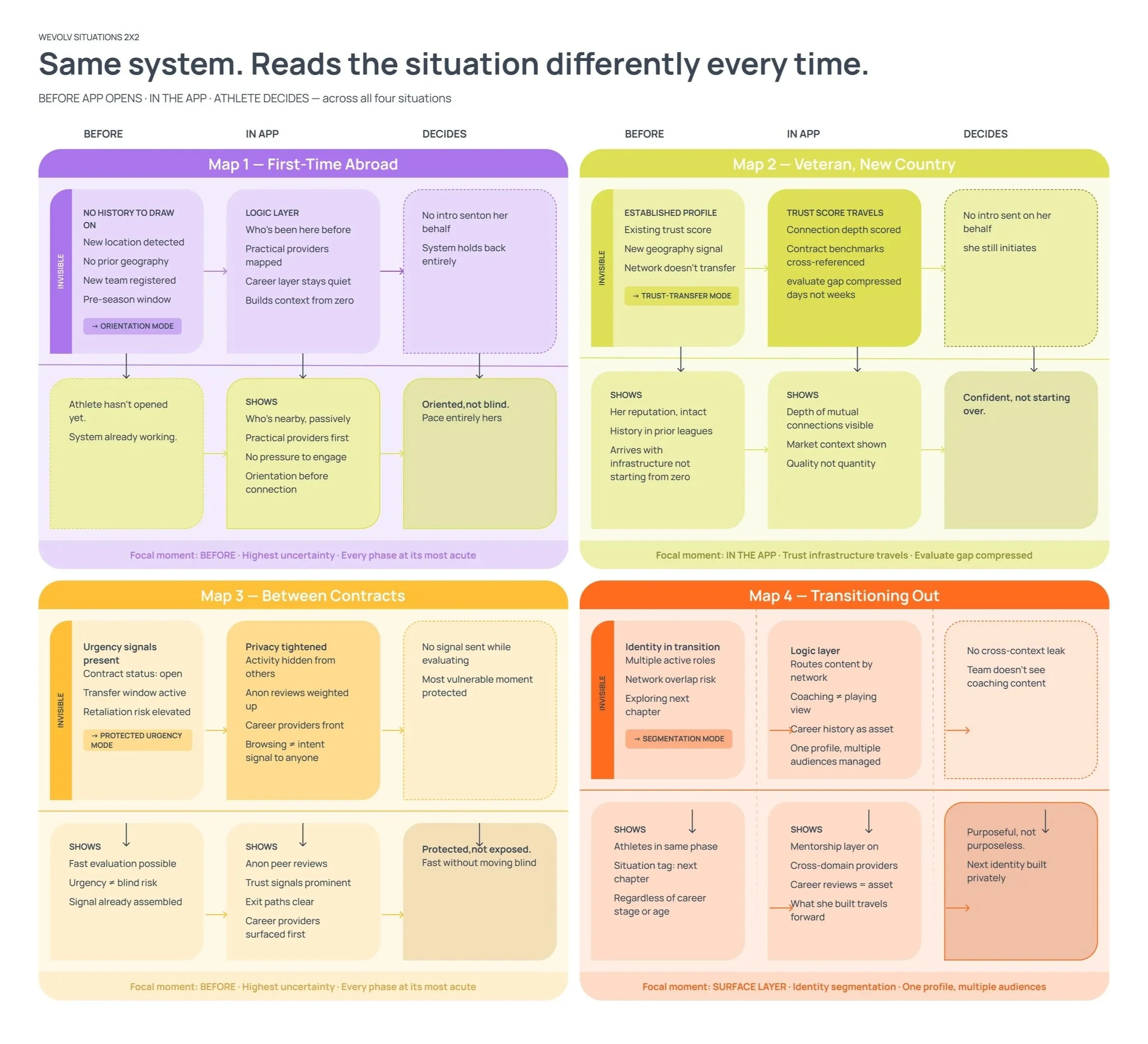

What the research revealed instead: Career stage doesn't predict what athletes need. Situation does. An athlete between contracts has completely different needs from a veteran settling into a new country, even if they're the same age, at the same level and in the same sport. The design had to account for four distinct situations. Each one produces different behavior from the system.

“The 4-phase model is the single most useful frame to come out of this engagement. We’ve been designing around activation and connection volume. Your research reframes the entire product as a decision platform. That’s the right frame.”

— Client feedback, WEVOLV

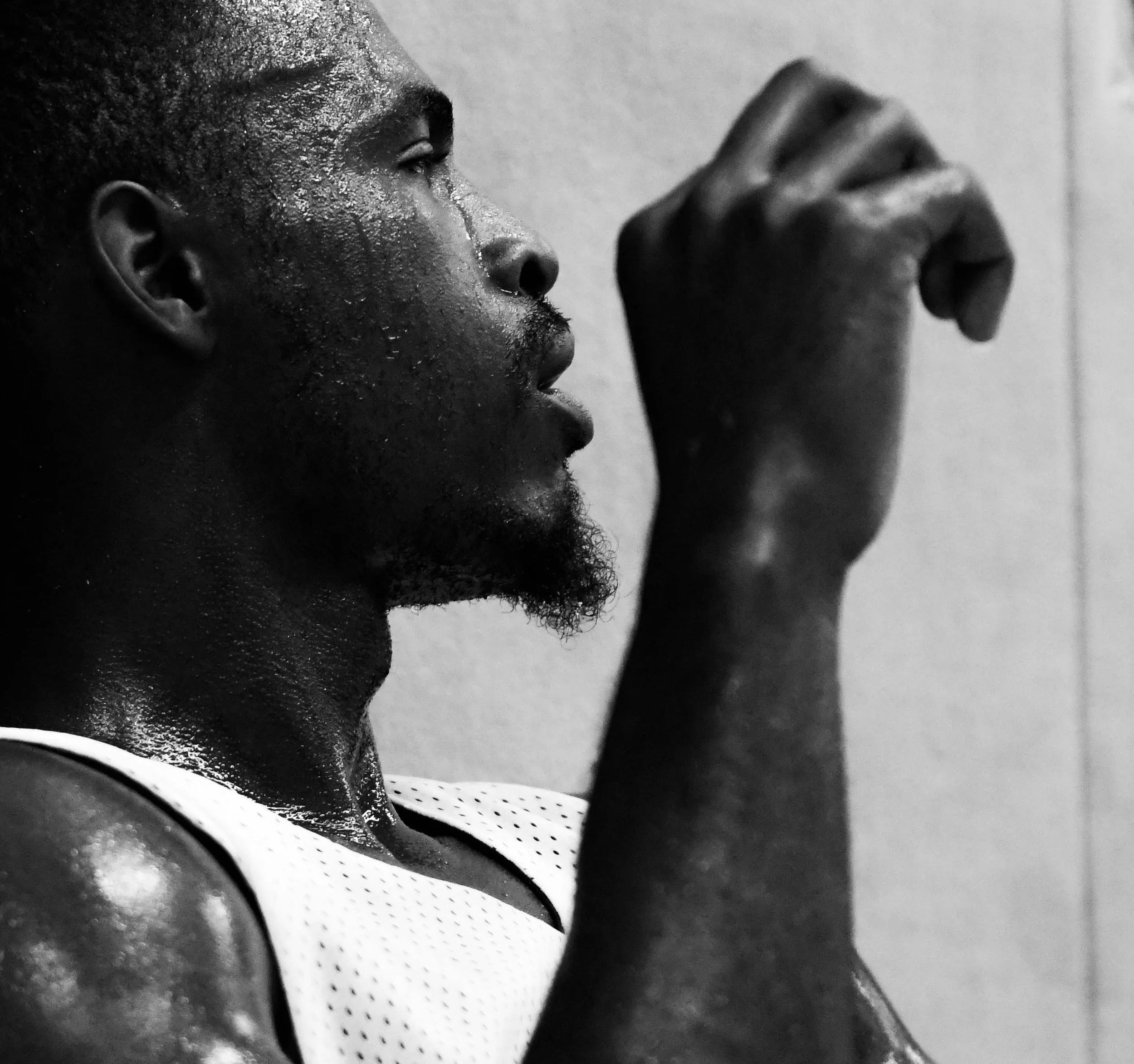

The research found something underneath the composure. That’s what I built for.

Gabby is a veteran. Seven seasons abroad. UK, Netherlands, Luxembourg, Belgium, Finland and Sweden. She knows how this works.

She's between contracts right now, training regularly, coaching to stay connected. From the outside, she looks like exactly what she is: a professional in strategic patience mode, staying ready until the right opportunity surfaces.

But the research found something underneath that composure. Gabby wasn't sure her visibility was actually reaching the right people. Not panic, just quiet, persistent uncertainty about whether the system was working for her or whether she was just waiting in the dark.

That's what this flow was designed for. Not the composed exterior. The uncertainty underneath it.

The system does the labor. She stays ready.

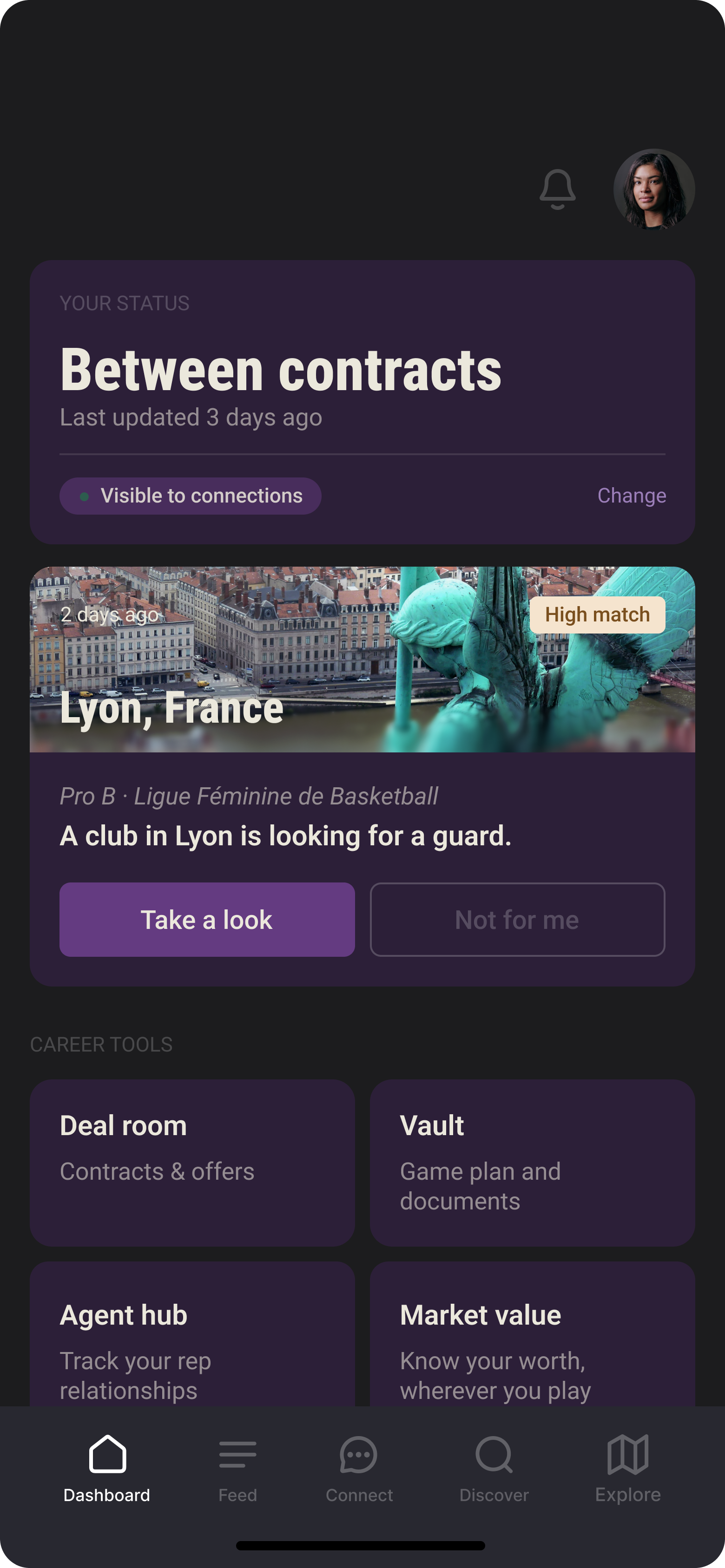

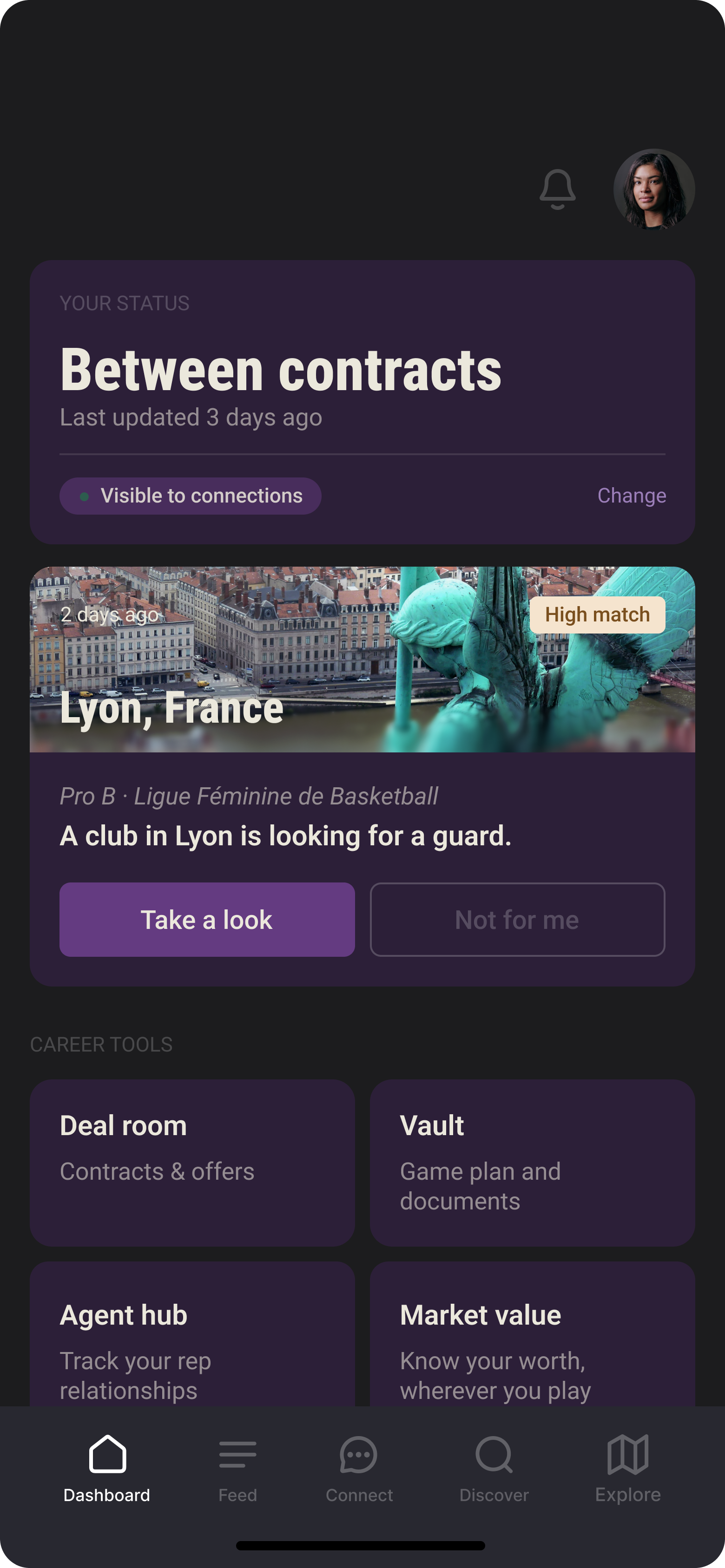

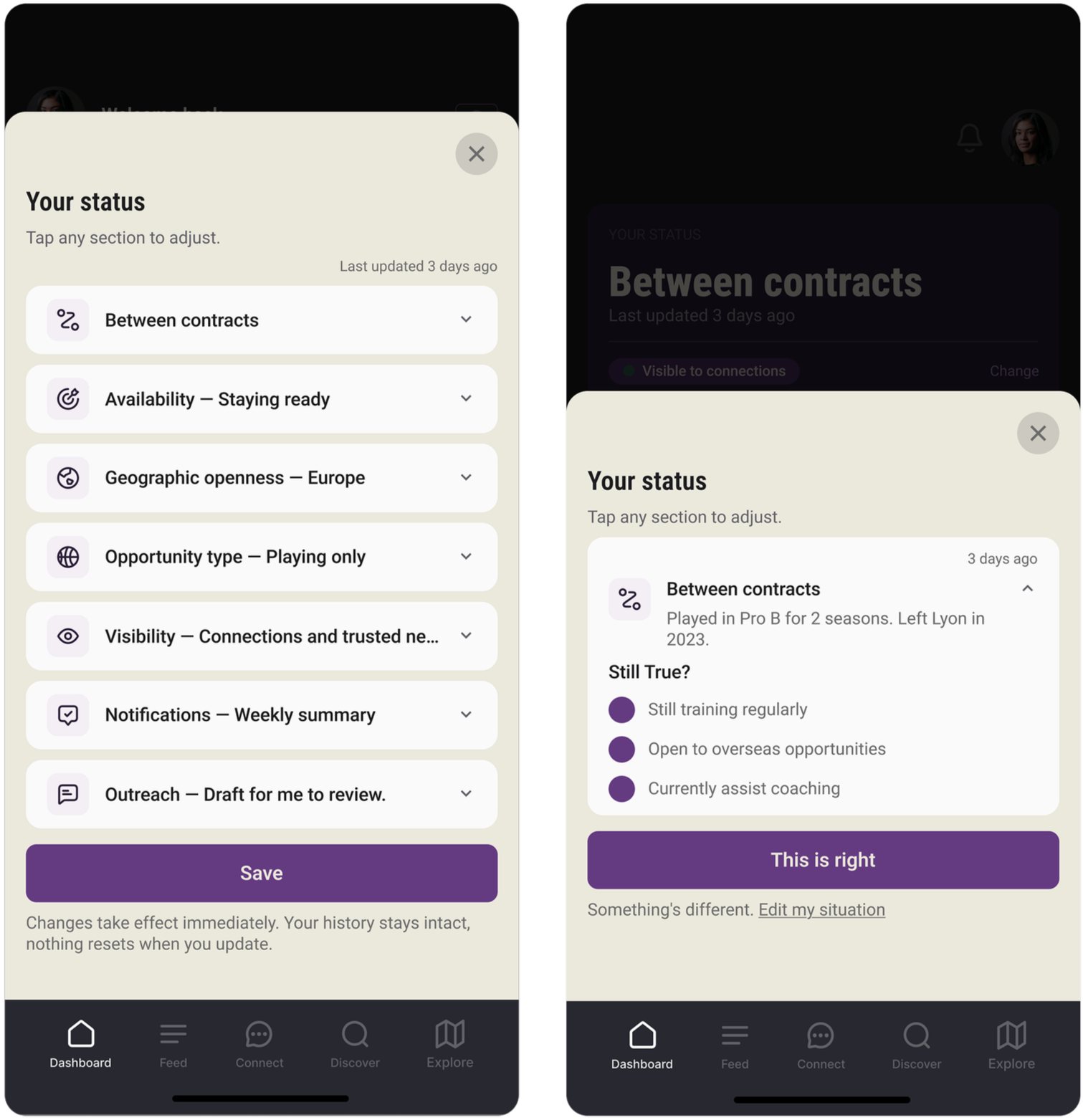

These screens follow Gabby through one specific moment: between contracts.

Every design decision responds to what she needs right now, not what athletes need in general.

Dashboard

Three athletes named visibility control unprompted. Not as a preference, but as something they needed to feel safe.

Onboarding

Athletes don't extend trust to a system before seeing it demonstrate accuracy. The onboarding earns it before asking for anything.

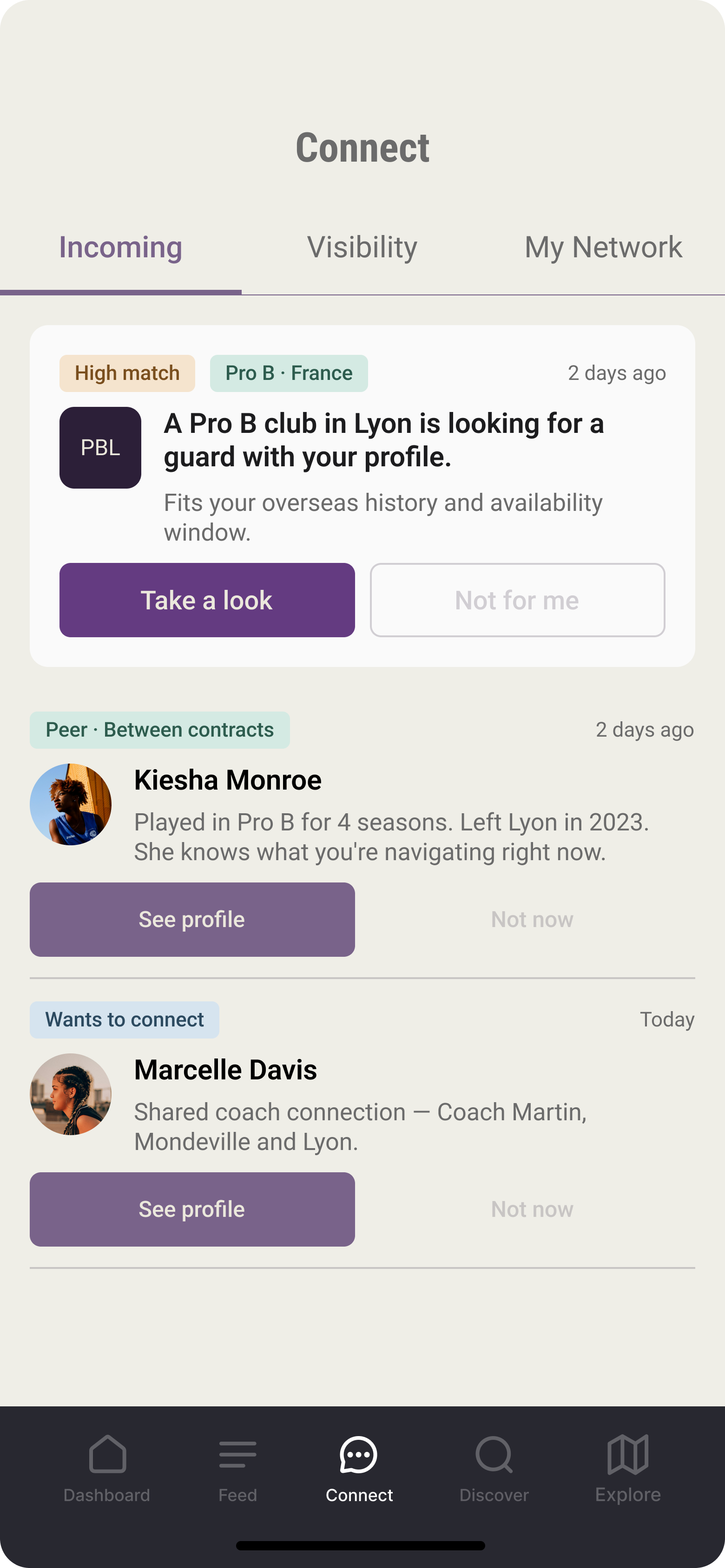

Connect

Interviews showed 2-4 weeks of passive observation before any contact. The card leads with the match reason so the system does that work, not her.

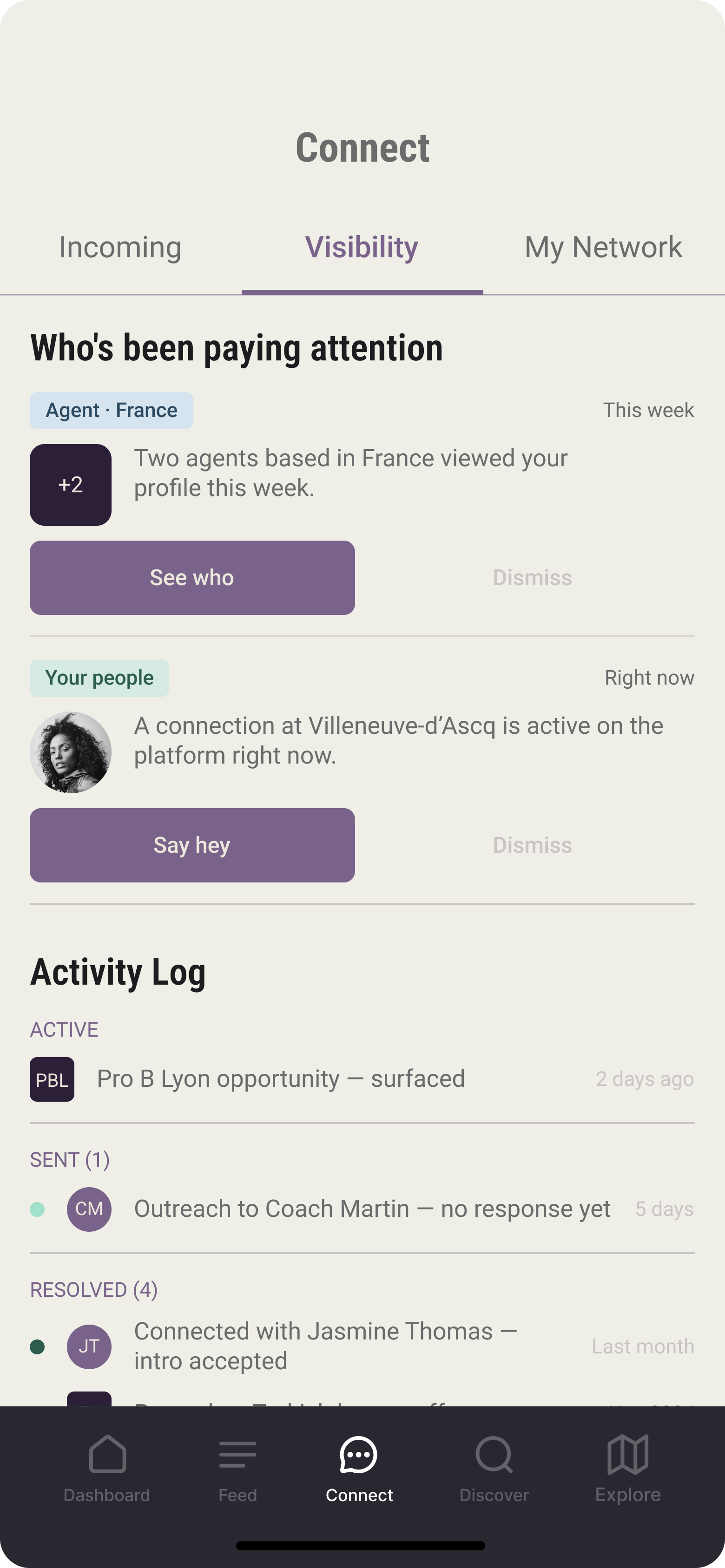

Visibility

Both veteran interviews surfaced the same quiet uncertainty. Was her visibility actually reaching the right people? Specific signals answer that. Reassurance copy doesn't.

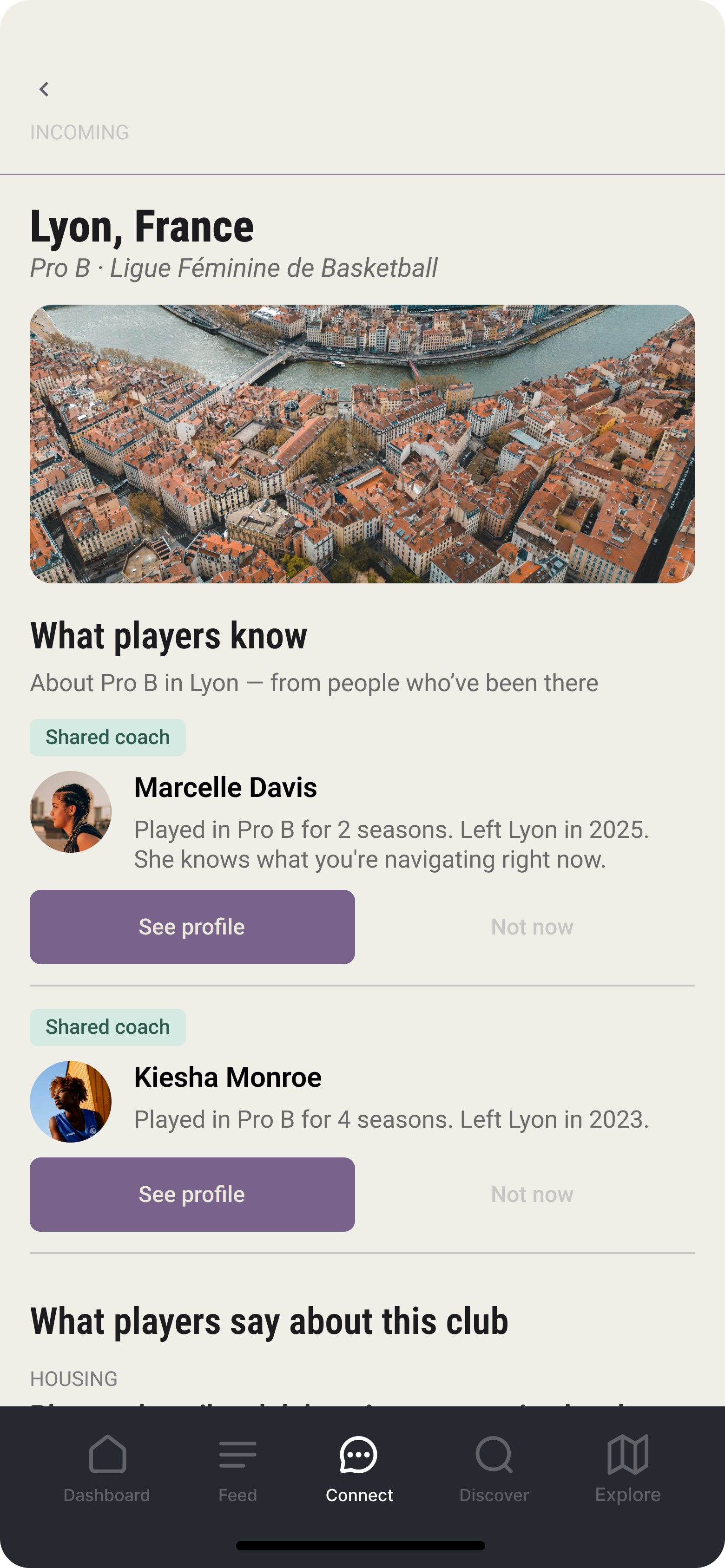

What players know

Athletes rely on informal peer networks for local intel. The research found that being everyone's resource was its own kind of labor. The peer cards surface that knowledge without putting it on any one athlete to carry.

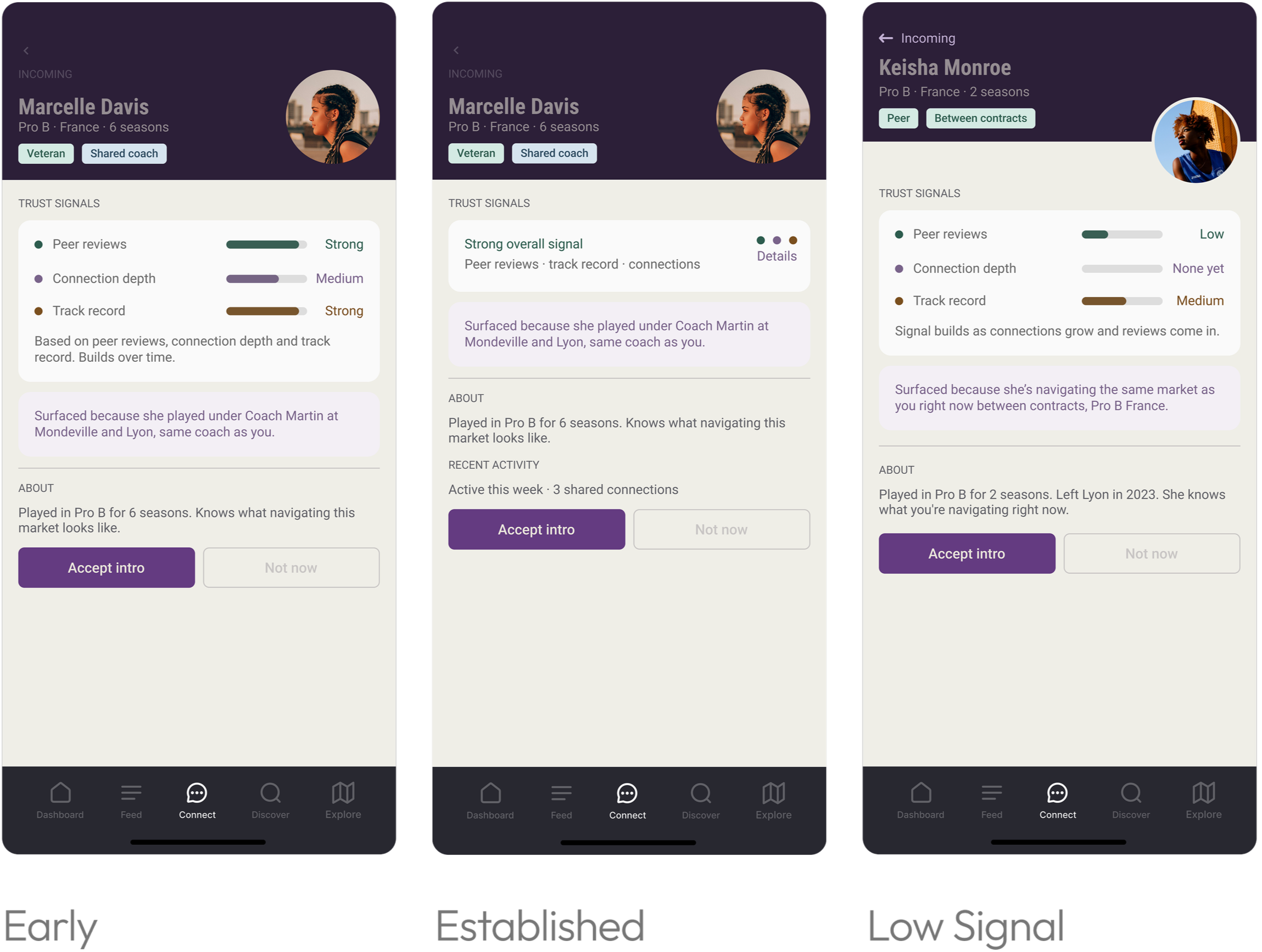

The research made the case against verified badges.

Here’s what the data supported instead.

Insight: 78% of athletes named peer reviews as their primary trust signal. Background checks registered at 4.3%. Verified badges don't build trust; peers do.

Design: A composite of three signals: peer reviews, connection depth and track record. Visible on the profile before she decides whether to engage.

Three states designed for different moments:

Early: Three labeled bars show the system's work while trust is still being established.

Established: Condenses to three dots with a summary line. Less scaffolding, same signals.

Low signal: Shows honestly what's known and what isn't. Bars appear at “Low” or “None yet”.

Impact: An incomplete signal is more trustworthy than a fabricated one. The same principle that governs onboarding governs this: graduated autonomy applied to transparency.
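To make the three states concrete, here is a minimal sketch of how the composite might choose what to show. It is an illustration under stated assumptions, not the shipped logic: the 0 to 1 scores, the thresholds, the "Medium" and "High" labels, the `trust_established` flag and all function names are hypothetical. Only the three signals, the three states and the “Low” / “None yet” labels come from the design.

```python
from dataclasses import dataclass
from typing import Optional

# The three signals named in the design.
SIGNALS = ("peer_reviews", "connection_depth", "track_record")


@dataclass
class TrustSignals:
    # Hypothetical 0.0-1.0 scores; None means nothing is known yet.
    peer_reviews: Optional[float] = None
    connection_depth: Optional[float] = None
    track_record: Optional[float] = None


def label(score: Optional[float]) -> str:
    """Turn a score into a bar label. Thresholds are assumptions."""
    if score is None:
        return "None yet"  # say so honestly instead of inventing a value
    if score < 0.34:
        return "Low"
    if score < 0.67:
        return "Medium"  # assumed label, not from the case study
    return "High"  # assumed label, not from the case study


def render(signals: TrustSignals, trust_established: bool) -> dict:
    """Pick one of the three display states for a profile."""
    labels = {name: label(getattr(signals, name)) for name in SIGNALS}
    if any(value in ("None yet", "Low") for value in labels.values()):
        # Low signal: keep the labeled bars and show what is missing.
        return {"state": "low_signal", "view": "bars", "labels": labels}
    if trust_established:
        # Established: same signals, condensed to three dots.
        return {"state": "established", "view": "dots", "labels": labels}
    # Early: labeled bars show the system's work.
    return {"state": "early", "view": "bars", "labels": labels}
```

In this sketch a missing signal always wins over the condensed view, so a profile never looks more certain than the data behind it.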

Career-level decisions deserve a deliberate commit moment. Auto-save isn’t enough.

Save button: One Save button throughout the status sheet. Not auto-save. Nothing changes until she commits it.

Still True check-in: Pre-populated confirmations, not blank fields. Re-entering context she already shared signals that the platform doesn't know her. Confirming builds trust before asking anything new.
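The two patterns above can be sketched as a small piece of state handling. This is a hedged illustration, not the production code: the `StatusSheet` class, its method names and the field names in the usage example are all hypothetical.

```python
class StatusSheet:
    """Edits go to a draft; nothing is committed until save() is called."""

    def __init__(self, saved: dict):
        self.saved = dict(saved)
        # The draft starts pre-populated with what she already shared.
        self.draft = dict(saved)

    def edit(self, field: str, value: str) -> None:
        # Editing only touches the draft, never the saved record.
        self.draft[field] = value

    def has_unsaved_changes(self) -> bool:
        return self.draft != self.saved

    def save(self) -> None:
        # The deliberate commit moment: one explicit action.
        self.saved = dict(self.draft)

    def discard(self) -> None:
        self.draft = dict(self.saved)

    def check_in_prompts(self) -> list:
        # "Still true?" prompts carry the saved value to confirm,
        # rather than presenting a blank field to fill in again.
        return [(field, value) for field, value in self.saved.items()]
```

A short usage example with made-up values:

```python
sheet = StatusSheet({"situation": "between_contracts", "open_to_offers": "yes"})
sheet.edit("open_to_offers", "no")
print(sheet.has_unsaved_changes())  # True until she taps Save
sheet.save()
```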

Gabby shows up. The system already knows.

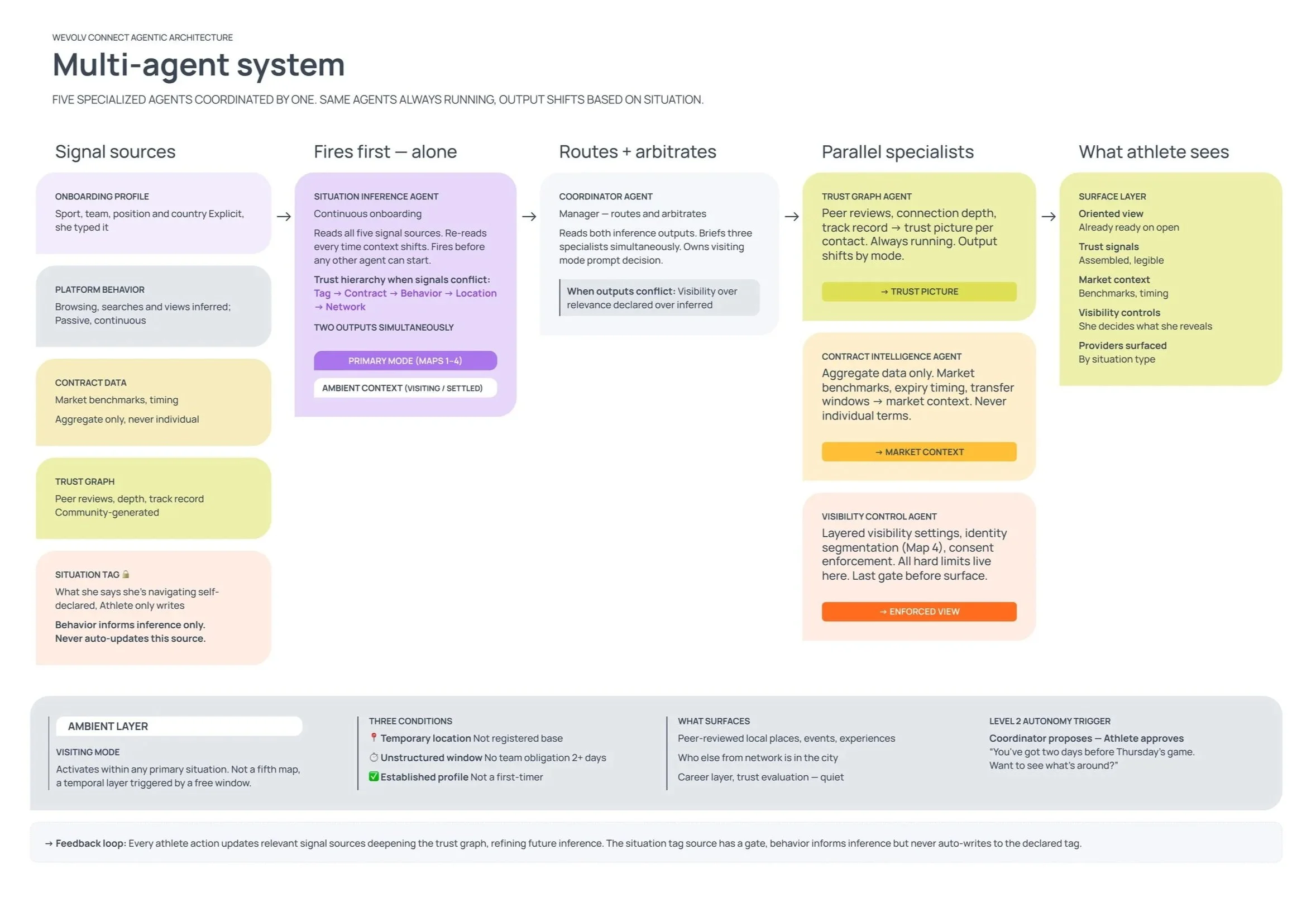

Five agents handle the logic, each with a defined job, defined inputs, defined outputs. The situation inference agent fires first and alone. The same system reads the situation differently every time depending on where the athlete actually is right now.

One rule governs all conflicts. What she says she's navigating overrides what the system infers. If there's a conflict, her declaration wins.

A system that updates her situation without asking isn't a partner. It's a manager.
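The conflict rule is simple enough to express in a few lines. The sketch below is an assumption about how it could be implemented, not the actual agent code: `resolve_situation`, the `Resolution` type, the `needs_confirmation` flag and the situation labels are hypothetical names chosen for illustration.

```python
from typing import NamedTuple, Optional


class Resolution(NamedTuple):
    situation: Optional[str]
    source: str  # "declared", "inferred" or "unknown"
    needs_confirmation: bool


def resolve_situation(declared: Optional[str], inferred: Optional[str]) -> Resolution:
    """Her declaration always wins over what the system infers."""
    if declared is not None:
        return Resolution(declared, "declared", False)
    if inferred is not None:
        # Assumption: an inference is only a suggestion, so the system
        # asks her to confirm it rather than updating silently.
        return Resolution(inferred, "inferred", True)
    return Resolution(None, "unknown", True)
```

For example, if she has declared that she is between contracts while the inference agent reads her activity as settling into a new country, `resolve_situation("between_contracts", "settling_in")` returns her declared situation with `source` set to `"declared"`.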

Four athlete situations. Four different system responses. Each map shows what the system knows before the app opens, what it surfaces inside the app and what the athlete decides. Same logic layer, different output every time based on situation, not career stage.

How context moves through the system before Gabby opens the app.

The findings that mattered most were the ones that broke the hypothesis.

Every decision in this system traces back to how athletes actually build trust, not how a platform assumes they do. The research set the bar. If a decision couldn't be tied to observed athlete behavior, it didn't belong in the design.

The research behind these decisions is documented in the WEVOLV research case study.